Apple Patents Triple Lens, Triple Sensor Combo

With one luminance sensor and two chrominance sensors, image quality should be better

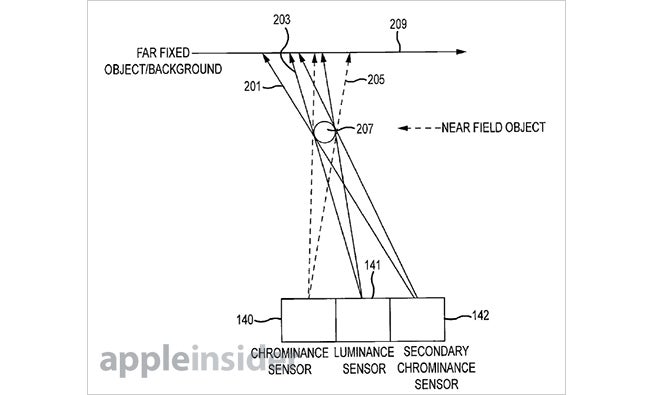

Apple has just been awarded a patent for an interesting take on a camera's imaging system. Rather than the standard setup of a lens focusing light onto a single sensor, the patent describes a system of three sensors: one for capturing luminance, flanked by two for capturing chrominance. Each sensor would have its own dedicated lens assembly to direct light as needed.

This differs from a standard all-in-one sensor in that each sensor would handle just one component of the image, either luminance (brightness) or chrominance (color). Ideally, the separate readings would then be combined into a more accurate image.

The trade-off is that each of the three sensors would be much smaller than a single unified sensor, and the usual small-sensor problems with image noise and dynamic range would doubtless arise. The three-sensor unit would also have to account for the possibility that one lens has a blind spot the others don't, and compensate for it.
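To make the idea of combining separate luminance and chrominance readings concrete, here is a minimal Python sketch. It is not taken from the patent: the function names are hypothetical, the conversion uses the standard full-range YCbCr-to-RGB formula from JPEG/JFIF, and averaging the two chroma readings (falling back to one when the other has a blind spot) is purely an assumption for illustration.

```python
def merge_chroma(left, right):
    """Merge (Cb, Cr) readings from two chroma sensors.

    Assumption for illustration: None marks a blind spot for that sensor.
    """
    if left is None and right is None:
        return (128.0, 128.0)  # no color data: fall back to neutral gray
    if left is None:
        return (float(right[0]), float(right[1]))
    if right is None:
        return (float(left[0]), float(left[1]))
    # Both sensors see the point: average the two readings.
    return ((left[0] + right[0]) / 2, (left[1] + right[1]) / 2)


def ycbcr_to_rgb(y, cb, cr):
    """Full-range YCbCr to 8-bit RGB (JFIF coefficients)."""
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)

    def clamp(v):
        return max(0, min(255, round(v)))

    return (clamp(r), clamp(g), clamp(b))


def combine_pixel(luma, chroma_left, chroma_right):
    """Build one RGB pixel from one luma reading and two chroma readings."""
    cb, cr = merge_chroma(chroma_left, chroma_right)
    return ycbcr_to_rgb(luma, cb, cr)
```

A real pipeline would also have to align the three slightly offset views before merging them, which this sketch ignores.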

Since this is just a patent, there's no indication that Apple is actually planning to make a sensor array like this anytime soon. But if it did, it would be a very different approach to image capture from what iPhones have used up until now.

[via AppleInsider]